Sharing state between threads, to create an audio engine

In this short article, we'll take a look at some high-level concepts involved in sharing state across an application that can run on multiple CPU cores at once - using music software as the running example, as it's a nice analogy and relevant to my personal projects! We'll also look at how to get around some of the bottlenecks and problems that usually only multi-threaded applications have. The description stays high-level, as the concepts can be applied in any language.

Multi-threading, in general

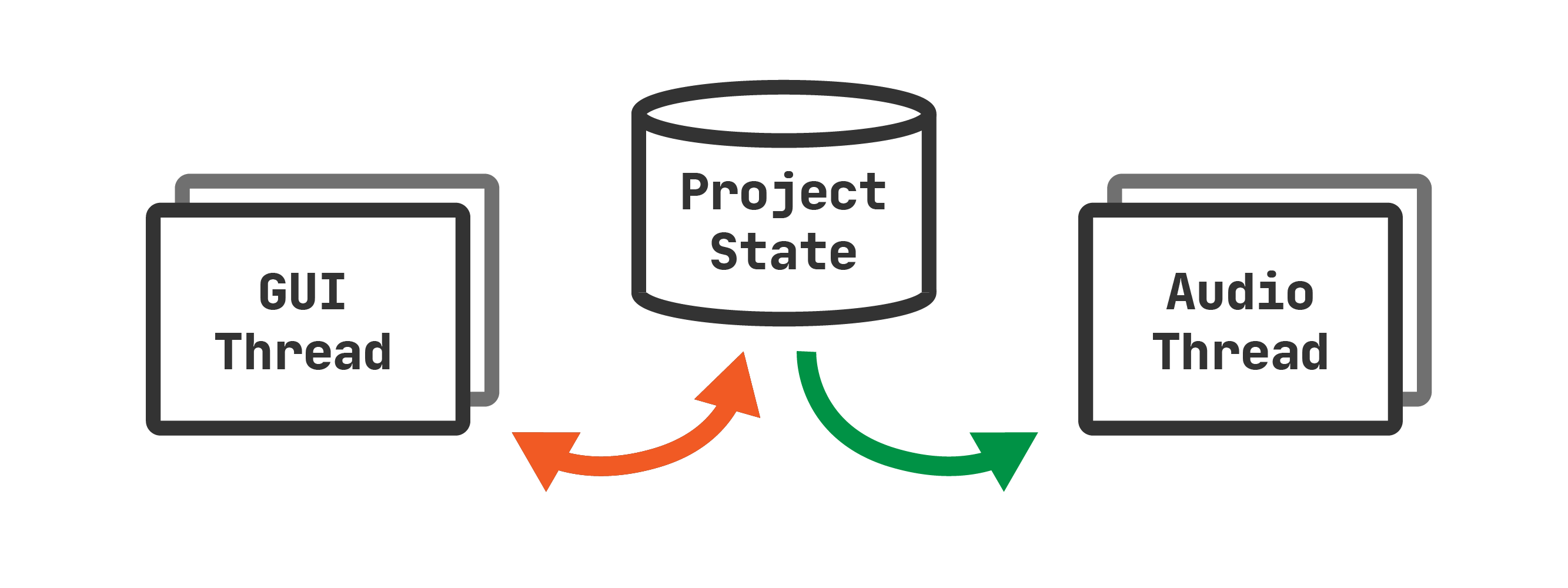

Let's assume you already have an application set up with a graphical user interface, and you have an area of memory dedicated to the project data (including audio files, effects, etc.). Whatever your project entails, we can look to separate this state from the GUI. To generate audio, you'll need a function running separately and continuously from the GUI, in another thread. This thread is solely dedicated to converting the state of the application into audio, so separating it from the GUI allows it to be highly performant and keep running even if the GUI slows down. At the end of this process, we'll have one data source and two threads, as seen in figure 1.

In this diagram, you can see that the GUI thread and audio thread are related to the data source in different ways. The GUI thread needs to both read and write to the data source (such as changing slider values, or adding new instruments), whereas the audio thread only needs to read from the data source - it does not need to change anything about the project to generate an output.
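To make the picture a little more concrete, here's a minimal Rust sketch of "one data source, two threads". The `Project` struct, its fields and the `render` function are made up for illustration, and the main thread stands in for the GUI. It only covers the read side: both threads read the same data through an `Arc`, and nothing is written yet.

```rust
use std::sync::Arc;
use std::thread;

// Hypothetical project data; a real project would hold audio files, effects, etc.
struct Project {
    volume: f32,
    samples: Vec<f32>,
}

// The audio side only ever reads the project to produce its output.
fn render(project: &Project) -> Vec<f32> {
    project.samples.iter().map(|s| s * project.volume).collect()
}

fn run() -> Vec<f32> {
    let project = Arc::new(Project {
        volume: 0.5,
        samples: vec![1.0, -1.0, 0.5],
    });

    // The audio thread gets its own handle to the same data source.
    let audio_project = Arc::clone(&project);
    let audio_thread = thread::spawn(move || render(&audio_project));

    // The "GUI" (here, the main thread) can keep reading in the meantime.
    let _gui_view_of_volume = project.volume;

    audio_thread.join().expect("audio thread panicked")
}

fn main() {
    println!("{:?}", run());
}
```

An `Arc` on its own only hands out read access, so the GUI can't change anything yet. Letting one thread write while another reads is what the rest of the article is about.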

Sharing state between threads

With multiple threads, there are multiple ways to share data between them. For very small pieces of information, such as a single number, this can be shared using "atomic" variables (e.g. AtomicUsize in Rust, AtomicInteger in Java, etc.). For larger stores of information, especially for the size some music software will end up growing into, we'll need to use another method.
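As a small illustration of the atomic approach, here's a sketch in Rust where several threads bump one shared `AtomicUsize`. The function name and the counts are arbitrary, chosen just for the example.

```rust
use std::sync::atomic::{AtomicUsize, Ordering};
use std::sync::Arc;
use std::thread;

// Several threads increment one shared number without any lock.
fn count_with_threads(threads: usize, increments: usize) -> usize {
    let counter = Arc::new(AtomicUsize::new(0));

    let handles: Vec<_> = (0..threads)
        .map(|_| {
            let counter = Arc::clone(&counter);
            thread::spawn(move || {
                for _ in 0..increments {
                    // Each increment is a single indivisible operation.
                    counter.fetch_add(1, Ordering::Relaxed);
                }
            })
        })
        .collect();

    // Joining the threads guarantees all their increments are visible below.
    for handle in handles {
        handle.join().expect("worker thread panicked");
    }

    counter.load(Ordering::Relaxed)
}

fn main() {
    println!("{}", count_with_threads(4, 1000));
}
```

This works nicely for a single number, but an atomic can't hold something the size of a whole project.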

Mutual Exclusion (Mutex)

First, there's the mutex. A mutex is a way to lock down a variable such that it can only be accessed by one thread at a time. This basic concept is incredibly useful for applications that don't just run on one thread, as it guarantees that whichever thread has locked the mutex can both read and write freely to the variable inside, until it releases the lock. However, this can lead to some fierce competition between threads if they are all trying to read or write to this variable at the same time. Eventually, they'll end up waiting in a queue to get access, and the application can grind to a halt.
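Here's roughly what that looks like with Rust's standard `Mutex`. The `Project` struct and the track names are hypothetical; the point is that each thread has to take the lock before touching the data, and only one of them holds it at a time.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

// Hypothetical project data for the example.
struct Project {
    track_names: Vec<String>,
}

fn add_tracks_from_threads(count: usize) -> usize {
    let project = Arc::new(Mutex::new(Project {
        track_names: Vec::new(),
    }));

    let handles: Vec<_> = (0..count)
        .map(|i| {
            let project = Arc::clone(&project);
            thread::spawn(move || {
                // Only one thread at a time gets past this line.
                let mut guard = project.lock().expect("mutex poisoned");
                guard.track_names.push(format!("Track {i}"));
                // The lock is released when `guard` goes out of scope.
            })
        })
        .collect();

    for handle in handles {
        handle.join().expect("worker thread panicked");
    }

    let guard = project.lock().expect("mutex poisoned");
    guard.track_names.len()
}

fn main() {
    println!("{}", add_tracks_from_threads(8));
}
```

Every access, including a plain read, goes through `lock()`, which is exactly where the queueing described above comes from.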

Read/Write Locks (RwLock)

For applications that read a variable often, but only write to it occasionally, your next best bet is a concept called a Read/Write Lock (RwLock). It's similar to a Mutex in that, if you wish to write to the variable, you need to lock it down and release it when you're finished. However, as long as nobody is writing, this method allows any number of threads to come along and get read access simultaneously.
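A rough sketch of the same idea using Rust's standard `RwLock`, with a made-up `Project` holding a single `volume` field. Four reader threads share read access, while the main thread takes the write lock once.

```rust
use std::sync::{Arc, RwLock};
use std::thread;

// Hypothetical project data for the example.
struct Project {
    volume: f32,
}

fn read_while_writing_occasionally() -> (usize, f32) {
    let project = Arc::new(RwLock::new(Project { volume: 1.0 }));

    let readers: Vec<_> = (0..4)
        .map(|_| {
            let project = Arc::clone(&project);
            thread::spawn(move || {
                let mut reads: usize = 0;
                for _ in 0..100 {
                    // Any number of readers can hold this guard at once.
                    let guard = project.read().expect("lock poisoned");
                    // A reader sees the value from before or after the write.
                    assert!(guard.volume == 1.0 || guard.volume == 0.25);
                    reads += 1;
                }
                reads
            })
        })
        .collect();

    {
        // The writer waits for current readers, then has exclusive access.
        let mut guard = project.write().expect("lock poisoned");
        guard.volume = 0.25;
    }

    let total_reads: usize = readers
        .into_iter()
        .map(|handle| handle.join().expect("reader thread panicked"))
        .sum();
    let final_volume = project.read().expect("lock poisoned").volume;
    (total_reads, final_volume)
}

fn main() {
    println!("{:?}", read_while_writing_occasionally());
}
```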

An RwLock is almost perfect for music software, as the GUI thread will get write access once every time the screen refreshes, or if anything in the application is changed, while the audio thread may be requesting hundreds of reads every second. However, there may still be edge cases where a read is requested while a write is in progress. The audio thread then has to wait for the write lock to be released, which could pause it for just a split second - effectively leading to stuttering in the audio stream - so we can do one better.

Read-Copy-Update (RCU)

A slightly newer concept in the world of multi-threading (well, it's from the 1990s - but that's still relatively new in comparison!) is RCU. It's very similar to an RwLock, except that writing no longer blocks readers. Instead of locking the variable directly and preventing read access, a writer makes a new copy of the existing variable while the other threads carry on reading the old one, makes any changes it needs (to the new copy), and then publishes it so that any new readers see the new copy. Once everybody's finished reading the old copy, the old copy gets destroyed. It's important to note that the readers and writers of this variable are completely oblivious to all of this happening - they see the variable as they would if they used an RwLock! The main difference here is the process going on underneath, allowing them to read and write at the same time.
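Rust's standard library doesn't ship an RCU type, so the following is only an approximation of the idea built from `Arc` and `Mutex`, not a real RCU implementation. `Shared`, `Project` and `demo` are hypothetical names, and the sketch assumes a single writer (the GUI thread). The lock is held just long enough to clone or swap a pointer, never while the project itself is being copied or edited.

```rust
use std::sync::{Arc, Mutex};

// Hypothetical project data for the example.
#[derive(Clone)]
struct Project {
    tracks: Vec<String>,
}

// Hypothetical helper type, not part of the standard library.
struct Shared<T> {
    current: Mutex<Arc<T>>,
}

impl<T: Clone> Shared<T> {
    fn new(value: T) -> Self {
        Shared {
            current: Mutex::new(Arc::new(value)),
        }
    }

    // Readers grab a snapshot: the lock is held only to clone the pointer.
    fn read(&self) -> Arc<T> {
        let guard = self.current.lock().expect("lock poisoned");
        Arc::clone(&*guard)
    }

    // Assumes one writer: copy the data, change the copy, then publish it.
    fn update(&self, change: impl FnOnce(&mut T)) {
        let old = self.read();
        let mut copy: T = (*old).clone();
        change(&mut copy);
        let mut guard = self.current.lock().expect("lock poisoned");
        // Old snapshots stay valid until their last reader drops them.
        *guard = Arc::new(copy);
    }
}

fn demo() -> (usize, usize) {
    let shared = Shared::new(Project {
        tracks: vec!["Drums".to_string()],
    });

    let audio_snapshot = shared.read(); // a reader part-way through its work
    shared.update(|project| project.tracks.push("Bass".to_string()));
    let fresh_snapshot = shared.read(); // a reader arriving after the write

    (audio_snapshot.tracks.len(), fresh_snapshot.tracks.len())
}

fn main() {
    println!("{:?}", demo());
}
```

The old snapshot still shows one track while the fresh one shows two, which is the "read and write at the same time" behaviour described above. For a real audio thread you'd likely want a lock-free pointer swap rather than a `Mutex` (crates such as arc-swap exist for this), but the copy, change and publish steps stay the same.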

Using all that in context

That's a lot of information to take in at once, so while you don't particularly need to understand how it all works underneath (yet - you'll want to one day, I'm sure!), it is important to know that there are different methods. For our audio thread, we'll want to use RCU if our programming environment allows, due to the reads and writes potentially happening simultaneously.

So - you've started the application and a "project" is created and converted into an RCU variable. What do you do now? After the GUI has started, I'd begin by creating a separate thread which talks to your headphones or speakers (taking a handle to the project RCU with you), and when the audio output begins, use aaaall the project's information to create sound, including audio samples, synthesizers, MIDI events to begin and end notes, and the list goes on. That's a whole other topic, which we'll probably go very in-depth into one day - but at the very least, now you can read and write to a variable across two threads.

Further Reading

To understand these concepts more from a general computer science level, the Wikipedia articles on mutual exclusion, readers-writer locks and read-copy-update go into more detail but use lower-level language, which may be slightly more difficult to understand at first.

If you're writing Rust as I am, I'd recommend Mara Bos' book, Rust Atomics and Locks. At the time of writing I've only skimmed the surface of it, but it's already had rave reviews, and I'm very much looking forward to learning in-depth memory management in the context of low-level concurrency. Conveniently for me, it was released right around the time I started getting interested in this as a topic!